Your Complete AI Post-Training Operating System

LH2 brings together verified domain experts and purpose-built automation, delivering training data with the quality and scale enterprise AI demands

Platform Capabilities

The annotation infrastructure behind production-grade AI training data

Multi-modal Annotation

Structured annotation pipelines across text, image, audio, video, and code with modality-specific tooling and quality controls.

Configurable Annotation Workflows

Fully configurable task flows, review layers, and acceptance criteria to match your data specification exactly.

Built-in Quality Tools

Linters, compilers, and LLM validators embedded at every annotation step to catch errors before they propagate.

Expert Routing & Skill-Based Matching

Task assignment driven by domain expertise, skill taxonomy, and contributor performance history.

Real-Time Quality Dashboards

Granular visibility into task throughput, dataset quality, project progress, and identified risks.

API Access for Pipeline Integration

RESTful API access to programmatically trigger jobs, pull annotations, and integrate directly into your training infrastructure.

LH2 Expert Network

500+

Active experts

38%

PhD-level or equiv.

20+

Domains covered

94.2

Avg. quality score

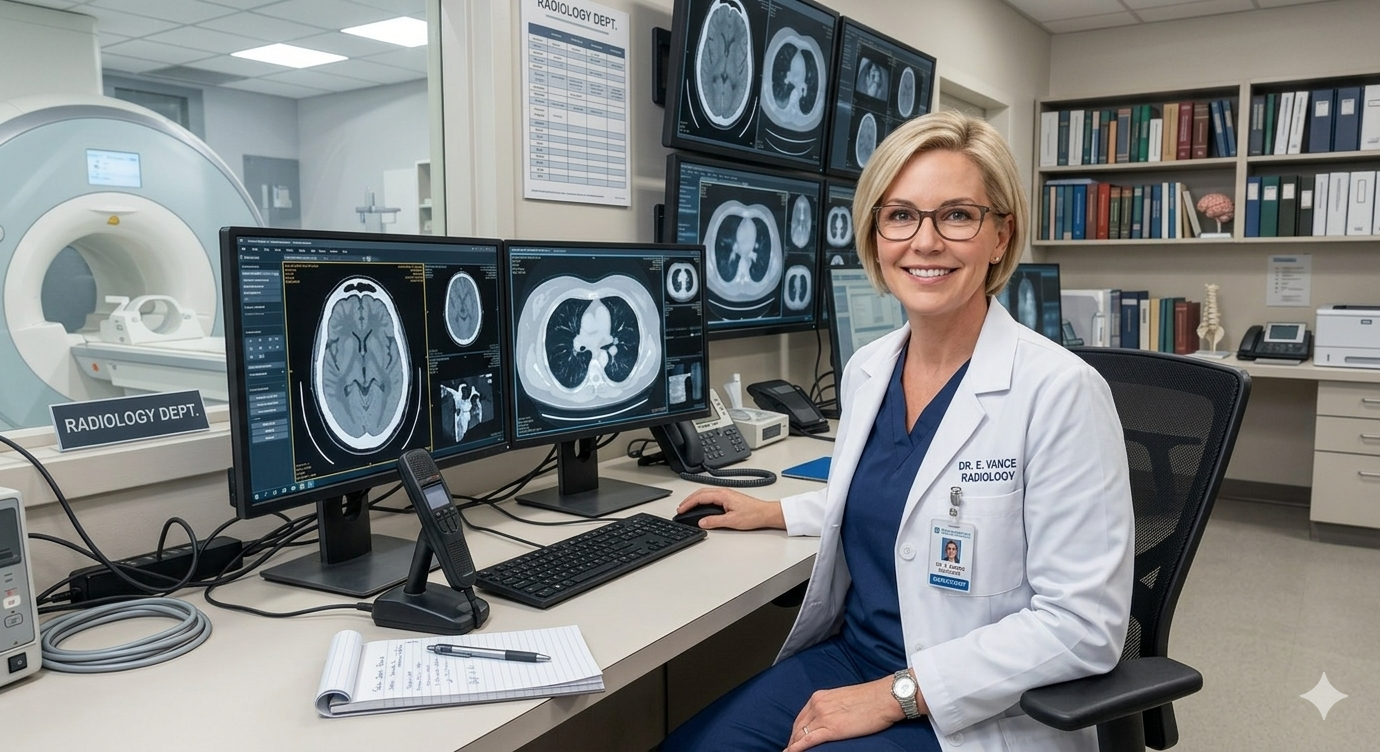

Senior Radiologist

15+ yrs clinical practice · MD, Radiology

Medical imaging

Clinical NLP

Principal Software Engineer

10+ yrs production eng. · Ex-FAANG

Code review

Code-gen eval

Manufacturing Engineer

15+ yrs supply chain experience

Quality control

Process optimization

How experts are assessed

Credential Verification - Degrees, publications, and professional affiliations independently verified before onboarding

Domain Assessment - Structured test tasks conducted at the start of every live project to establish a verified baseline quality score

Continuous Evaluation - Inter-annotator agreement monitored on every active project, with quality enforced at the task level

Performance Routing - Every task assigned based on domain score, skill taxonomy, and historical accuracy

Experts by domain

Medical & Life Sciences

Software Development

Engineering

Finance

Quality Framework

Gold Labels

Pre-validated reference annotations embedded into live task queues to benchmark contributor accuracy. Every contributor is continuously measured against gold-standard responses, so quality is enforced at the source.
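As a rough illustration of gold-label benchmarking, the Python sketch below scores each contributor by the fraction of embedded gold tasks they answered correctly. It assumes one correct label per gold task and a simple exact-match comparison; the function name `gold_accuracy` and the tuple layout are hypothetical and do not describe LH2's internal scoring.

```python
from collections import defaultdict


def gold_accuracy(responses, gold):
    """Per-contributor accuracy on embedded gold tasks (illustrative sketch).

    responses: iterable of (contributor_id, task_id, label) tuples.
    gold: dict mapping gold task_id -> pre-validated reference label.
    Non-gold tasks in the same queue are ignored.
    """
    correct = defaultdict(int)
    attempted = defaultdict(int)
    for contributor, task, label in responses:
        if task not in gold:
            continue  # ordinary work item, not a benchmark task
        attempted[contributor] += 1
        if label == gold[task]:  # exact-match comparison (assumption)
            correct[contributor] += 1
    return {c: correct[c] / attempted[c] for c in attempted}


if __name__ == "__main__":
    responses = [
        ("a", "g1", "cat"), ("a", "g2", "dog"), ("a", "t9", "cat"),
        ("b", "g1", "cat"), ("b", "g2", "cat"),
    ]
    gold = {"g1": "cat", "g2": "dog"}  # hypothetical gold set
    print(gold_accuracy(responses, gold))  # {'a': 1.0, 'b': 0.5}
```

Contributor `a` matches both gold labels and scores 1.0, `b` misses one of two and scores 0.5, and the non-gold task `t9` does not affect either score.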

Inter-Annotator Agreement

Every task is independently annotated by multiple contributors, with agreement scores calculated to surface inconsistency before it compounds. High-disagreement tasks are automatically flagged for expert review.
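One common way to quantify agreement among several annotators is Fleiss' kappa, which compares observed pairwise agreement with the agreement expected by chance. The sketch below computes it and flags tasks with low per-task agreement. Fleiss' kappa itself, the fixed number of raters per task, and the 0.5 flag threshold are assumptions chosen for illustration, not LH2's documented metric.

```python
from collections import Counter


def fleiss_kappa_with_flags(task_labels, flag_below=0.5):
    """Return (Fleiss' kappa, sorted list of low-agreement task ids).

    task_labels: dict mapping task_id -> list of labels, with the same
        number of raters (at least 2) on every task.
    flag_below: hypothetical threshold on per-task agreement P_i.
    """
    if not task_labels:
        raise ValueError("no tasks supplied")
    n = len(next(iter(task_labels.values())))  # raters per task
    if n < 2 or any(len(labels) != n for labels in task_labels.values()):
        raise ValueError("every task needs the same number (>= 2) of labels")
    num_tasks = len(task_labels)
    counts = {t: Counter(labels) for t, labels in task_labels.items()}

    # P_i: fraction of rater pairs on task i that chose the same label.
    p_i = {
        t: sum(c * (c - 1) for c in cnt.values()) / (n * (n - 1))
        for t, cnt in counts.items()
    }
    p_bar = sum(p_i.values()) / num_tasks

    # P_e: agreement expected by chance from overall label proportions.
    totals = Counter()
    for cnt in counts.values():
        totals.update(cnt)
    p_e = sum((v / (num_tasks * n)) ** 2 for v in totals.values())

    # If every label is identical, kappa is undefined; this sketch reports 1.0.
    kappa = 1.0 if p_e == 1 else (p_bar - p_e) / (1 - p_e)
    flagged = sorted(t for t, p in p_i.items() if p < flag_below)
    return kappa, flagged


if __name__ == "__main__":
    labels = {
        "t1": ["a", "a", "a"],
        "t2": ["a", "b", "a"],
        "t3": ["b", "b", "b"],
        "t4": ["a", "b", "c"],
    }
    kappa, flagged = fleiss_kappa_with_flags(labels)
    print(round(kappa, 3), flagged)  # 0.268 ['t2', 't4']
```

Here the dataset-level kappa (exactly 11/41) summarizes overall consistency, while the per-task scores route `t2` and `t4` to expert review.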

Random Sampling

Statistical sampling protocols applied across every batch to validate dataset quality without full manual review.
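A minimal sketch of batch-level random sampling, assuming Cochran's sample-size formula with a finite-population correction and a simple accept/reject rule on the observed error rate. The `is_correct` callback is a hypothetical stand-in for a human or automated review step, and the defaults (95% confidence with z = 1.96, a 5% margin, a 5% maximum error rate) are illustrative, not LH2's actual protocol.

```python
import math
import random


def sample_size(batch_size, margin=0.05, z=1.96, p=0.5):
    """Cochran's sample size for a proportion, with finite-population correction.

    p=0.5 is the most conservative guess at the true error rate.
    """
    n0 = (z ** 2) * p * (1 - p) / margin ** 2
    return math.ceil(n0 / (1 + (n0 - 1) / batch_size))


def review_batch(items, is_correct, max_error_rate=0.05, margin=0.05, seed=None):
    """Review a random sample of a batch and accept or reject the whole batch.

    is_correct: hypothetical callback standing in for a reviewer's verdict.
    Returns (accepted, observed_error_rate, sample_count).
    """
    if not items:
        raise ValueError("empty batch")
    rng = random.Random(seed)
    k = min(len(items), sample_size(len(items), margin))
    sample = rng.sample(list(items), k)  # simple random sample, no replacement
    errors = sum(1 for item in sample if not is_correct(item))
    error_rate = errors / k
    return error_rate <= max_error_rate, error_rate, k


if __name__ == "__main__":
    print(sample_size(10_000))  # 370
```

Under these assumptions a 10,000-item batch needs only 370 reviewed items, which is how sampling validates quality without full manual review.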

Fraud Detection

Behavioral and pattern-based signals monitored across every session to identify and remove bad-faith contributors in real time. Combines automated anomaly detection with expert review to protect dataset integrity.
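Behavioral signals vary widely, but one simple example is flagging contributors who complete tasks implausibly fast. The sketch below applies the modified z-score (based on the median absolute deviation) to each contributor's median seconds per task. The signal, the 3.5 cutoff, and the function name are illustrative assumptions; in keeping with pairing automated detection with expert review, a flag here is meant as input for a reviewer rather than an automatic removal.

```python
import statistics


def flag_fast_contributors(median_seconds, threshold=3.5):
    """Flag contributors whose typical task time is anomalously low.

    median_seconds: dict contributor_id -> median seconds spent per task
        (hypothetical signal). Must contain at least one entry.
    Uses the modified z-score 0.6745 * (x - median) / MAD.
    """
    values = list(median_seconds.values())
    center = statistics.median(values)
    mad = statistics.median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no spread across contributors, so the score is undefined
    flagged = []
    for contributor, secs in median_seconds.items():
        score = 0.6745 * (secs - center) / mad
        if score < -threshold:  # only unusually fast work is suspicious here
            flagged.append(contributor)
    return sorted(flagged)


if __name__ == "__main__":
    times = {"a": 60, "b": 55, "c": 65, "d": 58, "e": 62, "f": 5}
    print(flag_fast_contributors(times))  # ['f']
```

The median and MAD are used instead of the mean and standard deviation so that a few bad-faith sessions cannot drag the baseline toward themselves.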

Integration

Pipeline Integration

End-to-end integration with your existing ML stack, from data ingestion to training-ready delivery, without rebuilding your pipeline. A RESTful API and webhook triggers let your team programmatically manage jobs, pull annotations, and automate handoffs.

Formats & Delivery

All datasets are delivered in your required format, including JSON, JSONL, CSV, COCO, and CoNLL, with full lineage, compliance metadata, and version control included. Both on-demand delivery and scheduled batch exports are supported.